Collecting Contacts from LinkedIn Using linkedin_crawl

The linkedin_crawl module for the recon-ng framework collects employee names and titles for a specified company on LinkedIn. It spiders through the “People also Viewed” pane that’s available on most public LinkedIn profile pages and scrapes user data as it goes. That information can be used to generate a list of email addresses for phishing campaigns, or usernames for online dictionary attacks executed during internal/external penetration tests.

Install

Since linkedin_crawl is part of the recon-ng framework, a simple

git clone https://LaNMaSteR53@bitbucket.org/LaNMaSteR53/recon-ng.git

should do the trick. For more information, follow the recon-ng usage guide.

Usage

*examples are edited for anonymity*

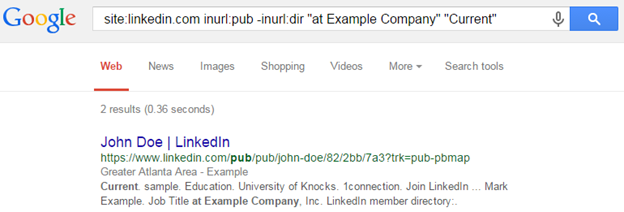

1. Identify a seed employee for the targeted company. This is pretty easy with Google: search for “company name LinkedIn”, or use this Google dork by Tim Tomes:

site:linkedin.com inurl:pub -inurl:dir “at <company name>” “Current”

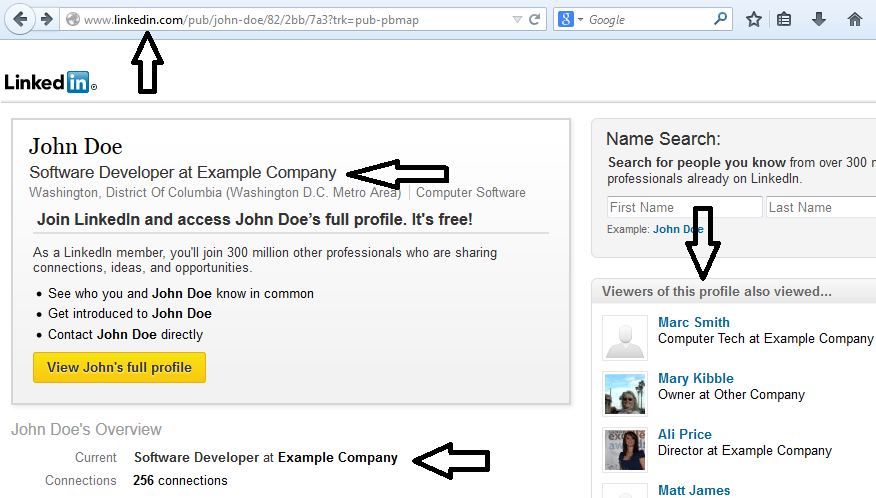

2. The seed employee’s profile should have the name of the targeted company spelled correctly, and it should include the “Viewers of this profile also viewed…” section. Copy this employee’s profile URL. In the example below, we will be using a seed page for John Doe from the “Example Company”.

3. Load up the recon-ng framework, navigate to the linkedin_crawl module, set the options, and run.

root@kali:~/recon-ng# ./recon-ng

[recon-ng][default] > use recon/companies-contacts/linkedin_crawl

[recon-ng][default][linkedin_crawl] > show options

Name Current Value Req Description

------- ------------- --- -----------

COMPANY no override the company name harvested...

URL yes public LinkedIn profile URL (seed)

[recon-ng][default][linkedin_crawl] > set URL https://www.linkedin.com/pub...

URL => https://www.linkedin.com/pub/john-doe/82/2bb/7a3?trk=pub-pbmap

[recon-ng][default][linkedin_crawl] > show options

Name Current Value Req Description

------- ------------- --- -----------

COMPANY no override the company...

URL https://www.linkedin.com/pub... yes public linkedin profile...

[recon-ng][default][linkedin_crawl] > run

---------------

EXAMPLE COMPANY

---------------

[*] Parsing ‘https://www.linkedin.com/pub/john-doe...

[*] Added: John Doe, Software Developer at Example Company(Washington...

[*] Parsing ‘https://www.linkedin.com/pub/ali-price...

[*] Added: Ali Price, Director at Example Company

[*] Parsing ‘https://www.linkedin.com/pub/mary-kibble...

[*] Parsing ‘https://www.linkedin.com/pub/matt-james...

[*] Added: Matt James, Director of Software Services at Example Company...
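The Google dork from step 1 can also be filled in programmatically when you have several target companies to look up. The snippet below is a minimal sketch, not part of recon-ng: the helper names `build_seed_dork` and `google_search_url` are hypothetical, and it assumes the company name belongs right after “at ” inside the first quoted phrase of the dork.

```python
from urllib.parse import quote_plus


def build_seed_dork(company: str) -> str:
    """Return the seed-finding dork with the company name filled in.

    Assumption: the company name goes directly after "at " in the
    first quoted phrase.
    """
    return f'site:linkedin.com inurl:pub -inurl:dir "at {company}" "Current"'


def google_search_url(query: str) -> str:
    """URL-encode a query so it can be pasted into a browser."""
    return "https://www.google.com/search?q=" + quote_plus(query)


if __name__ == "__main__":
    dork = build_seed_dork("Example Company")
    print(dork)
    print(google_search_url(dork))
```

Any result whose profile shows the correctly spelled company name is a candidate seed URL for the URL option.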

Expected Results

The module crawls contacts from the “Viewers of this profile also viewed…” section and scrapes their information if they belong to the company found on the seed page. For a small company, it will not find many contacts and will only take about 30 seconds to run. For a large company, it could find thousands of contacts and take hours to run. Either way, it should steadily collect contacts from the targeted company. When the module finishes, view the contacts in the database.
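The crawl described above can be pictured as a breadth-first walk over “also viewed” links. The following is only a sketch of that idea, not the module’s actual source: it runs against a hypothetical in-memory dictionary (`PROFILES`) instead of LinkedIn, the sample titles and the “Other Corp” employer are made up, and it assumes that links on profiles from other companies are not followed.

```python
from collections import deque

# Hypothetical stand-in for public profile pages:
# url -> (name, title, company, "also viewed" urls)
PROFILES = {
    "seed": ("John Doe", "Software Developer", "Example Company", ["a", "b"]),
    "a": ("Ali Price", "Director", "Example Company", ["seed", "c"]),
    "b": ("Mary Kibble", "Analyst", "Other Corp", ["c"]),
    "c": ("Matt James", "Director of Software Services", "Example Company", ["a"]),
}


def crawl(seed_url, profiles, company=None):
    """Collect (name, title) pairs for people at the seed's company."""
    # The company defaults to the one on the seed page; passing `company`
    # mimics overriding it, as the COMPANY option does.
    target = (company or profiles[seed_url][2]).lower()
    queue = deque([seed_url])
    seen = {seed_url}
    contacts = []
    while queue:
        url = queue.popleft()
        profile = profiles.get(url)
        if profile is None:
            continue  # page missing or not parseable
        name, title, employer, also_viewed = profile
        if employer.lower() != target:
            continue  # assumption: do not follow links from other companies
        contacts.append((name, f"{title} at {employer}"))
        for next_url in also_viewed:
            if next_url not in seen:  # never parse the same page twice
                seen.add(next_url)
                queue.append(next_url)
    return contacts


if __name__ == "__main__":
    for name, title in crawl("seed", PROFILES):
        print(f"[*] Added: {name}, {title}")
```

The `seen` set is what keeps the run time bounded: without it, profiles that point at each other would be parsed forever. It also hints at why large companies take hours, since every matching profile adds more pages to the queue.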

[recon-ng][default] > show contacts

+---------------------..--------------------------------------------------------------+
| rowid | first_name | | last_name | email | title                                    |
+---------------------..--------------------------------------------------------------+
| 1     | Ali        | | Price     |       | Director at Example Company              |
| 2     | John       | | Doe       |       | Software Developer at Example Company    |
| 3     | Marc       | | Smith     |       | Computer Tech at Example Company         |
| 4     | Matt       | | James     |       | Director at Example Company              |
| 6     | Robert     | | Fiker     |       | Floor Manager at Example Company         |
| 5     | Tina       | | Beard     |       | Marketing Consultant at Example Company  |
+---------------------..--------------------------------------------------------------+

[*] 6 rows returned

This produces a nice list of names, titles, and regions, which could be helpful for a social engineering campaign or for generating dictionaries of possible usernames. The recon-ng framework also has plenty of other modules to mangle the contacts or export them to another format, which I find useful.
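To illustrate the username dictionary idea, here is a small standalone sketch that turns harvested first and last names into candidate usernames and email addresses. It is not one of the recon-ng modules mentioned above; the function names, the chosen naming patterns, and the `example.com` domain are all assumptions made for illustration.

```python
def username_candidates(first, last):
    """Return common username patterns for one person, without duplicates."""
    f, l = first.lower(), last.lower()
    patterns = [
        f"{f}.{l}",    # john.doe
        f"{f}{l}",     # johndoe
        f"{f[0]}{l}",  # jdoe
        f"{f}{l[0]}",  # johnd
        f"{l}{f[0]}",  # doej
    ]
    # dict.fromkeys drops duplicates while preserving order
    return list(dict.fromkeys(patterns))


def email_candidates(contacts, domain):
    """Build candidate addresses for every (first, last) pair."""
    emails = []
    for first, last in contacts:
        for user in username_candidates(first, last):
            emails.append(f"{user}@{domain}")
    return emails


if __name__ == "__main__":
    contacts = [("Ali", "Price"), ("John", "Doe")]
    for address in email_candidates(contacts, "example.com"):
        print(address)
```

In practice you would confirm the organization’s real naming convention first and keep only the matching pattern, which keeps the dictionary small.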

Conclusion

Hopefully this short intro helps you get started using this tool for all of your contact gathering needs. Since it is part of a community framework, please feel free to contribute fixes or features, and thanks to those who already have!
